“If you’re not world-class at integrations, and your whole product’s dependent on that, you’re screwed. So, one big piece is we had to get great at that, not just good at it, and every day we push that ball forward.” – Hiten Shah

If your product is mature, it should be quite obvious which actions and workflows matter most.

Since these key flows and actions are responsible for the value that users get from your product, you need to make sure that they’re as polished as they can be.

There are three main ways to assess tasks:

- Success: Can users achieve their goals with your product? Can they complete their tasks?

- Time: How much time does it take an average user to complete the task? Time is often viewed as a proxy for ease, but it isn’t always.

- Perceived ease of use: How hard is it to complete the task? Ease is completely subjective. It can vary depending on users’ backgrounds and experience.

As a general rule, you can consider a task to have been a ‘direct success’ if the user completed it using the expected flow, an ‘indirect success’ if the user completed it using alternate flows, and a ‘failure’ if the user was unable to complete it.

According to research by Dr. Jeff Sauro, the average task completion rate across products is 78%. This means that, across products and experiences, 22% of tasks fail.
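To make the success categories concrete, here is a minimal Python sketch of how you might tally test sessions and compare the result to the 78% average. The `classify` and `completion_rate` helpers and the sample attempts are hypothetical, made up for illustration; it also assumes that both direct and indirect successes count as completed tasks.

```python
from collections import Counter

# Average completion rate cited above (Jeff Sauro's research).
BENCHMARK_COMPLETION_RATE = 0.78


def classify(completed: bool, used_expected_flow: bool) -> str:
    """Label one task attempt using the three categories described above."""
    if not completed:
        return "failure"
    return "direct_success" if used_expected_flow else "indirect_success"


def completion_rate(outcomes: list[str]) -> float:
    """Share of attempts that ended in a direct or indirect success."""
    if not outcomes:
        raise ValueError("no task attempts recorded")
    counts = Counter(outcomes)
    return (counts["direct_success"] + counts["indirect_success"]) / len(outcomes)


# Hypothetical observations: (completed, used_expected_flow) per participant.
attempts = [(True, True), (True, False), (False, False), (True, True), (True, True)]
outcomes = [classify(done, expected) for done, expected in attempts]
rate = completion_rate(outcomes)

print(f"Completion rate: {rate:.0%} (benchmark: {BENCHMARK_COMPLETION_RATE:.0%})")
```

Keeping direct and indirect successes as separate labels lets you also see how often users have to fall back on alternate flows.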

Many user testing platforms like UserTesting, Usabilla, or Maze can help you measure task time and success.

Although task time and success are both useful measures, perceived ease of use can give you an important benchmark to iterate against.

The Single Ease Question (SEQ) Questionnaire

The Single Ease Question (SEQ) questionnaire is the best tool to evaluate ease of use. It’s a single-question questionnaire, generally administered right after a task to evaluate a user’s feeling toward the task they just attempted. It asks:

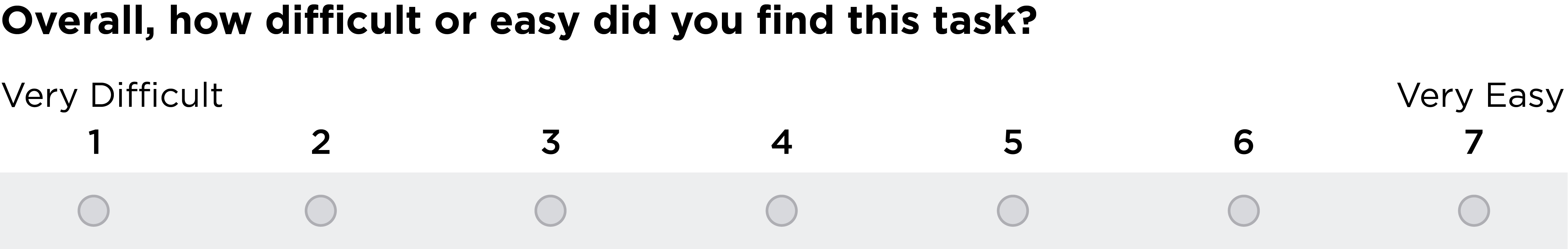

“Overall, how difficult or easy was the task to complete?”

A seven-point scale—labelled at the end points—makes user input quick and easy:

Post-task questionnaires should be short—three questions at most—to avoid breaking your user’s flow.

In spite of the simplicity of the questionnaire, responses are strongly correlated with other usability metrics like the System Usability Scale (SUS).

The Single Ease Question score for a task is the average of respondents’ answers. The average SEQ score across tasks is 5.5.

Scores can be broken down by segments, lifetime value, or any other user attributes.
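As a rough sketch of that arithmetic, the snippet below averages SEQ responses per task and per segment. The `seq_scores` helper, the field names, and the sample responses are all hypothetical; adapt them to however your survey tool exports data.

```python
from collections import defaultdict
from statistics import mean

# Average SEQ score across tasks, as cited above.
SEQ_BENCHMARK = 5.5


def seq_scores(responses: list[dict], key: str) -> dict:
    """Average the 1-7 SEQ answers, grouped by the given attribute."""
    groups = defaultdict(list)
    for response in responses:
        score = response["score"]
        if not 1 <= score <= 7:
            raise ValueError(f"SEQ answers must be between 1 and 7, got {score}")
        groups[response[key]].append(score)
    return {group: mean(scores) for group, scores in groups.items()}


# Hypothetical responses; task and segment names are made up.
responses = [
    {"task": "connect_integration", "segment": "trial", "score": 3},
    {"task": "connect_integration", "segment": "paid", "score": 5},
    {"task": "invite_teammate", "segment": "trial", "score": 6},
    {"task": "invite_teammate", "segment": "paid", "score": 7},
]

by_task = seq_scores(responses, "task")
by_segment = seq_scores(responses, "segment")
weakest = min(by_task, key=by_task.get)

print(by_task)
print(by_segment)
print(f"Weakest task: {weakest} (benchmark: {SEQ_BENCHMARK})")
```

The same function works for any attribute you store with each response, so adding a lifetime value bucket or a plan name only means passing a different `key`.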

Evaluating different tasks will help you identify the weakest parts of your product. Benchmarking the scores over time will help you track progress across iterations.

Since the SEQ questionnaire is not a good diagnostic tool, and users have difficulty separating the complexity of completing a task from the problems they experience while completing it, it’s a good idea to ask a follow-up question:

“What is the primary reason for your score?”

The reasons for scores below five (out of seven) will help point out issues and friction points in your product’s workflows.
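If you collect the follow-up answers alongside the scores, pulling out the reasons worth reading first can be as simple as the sketch below. The `low_score_reasons` helper, the field names, and the sample data are hypothetical; the threshold of five comes from the rule of thumb above.

```python
# Scores below this value (on the seven-point scale) are treated as low.
LOW_SCORE_THRESHOLD = 5


def low_score_reasons(responses: list[dict], threshold: int = LOW_SCORE_THRESHOLD) -> dict:
    """Group the stated reasons behind low SEQ scores by task."""
    reasons: dict[str, list[str]] = {}
    for response in responses:
        reason = response.get("reason", "").strip()
        # Skip good scores and blank follow-up answers.
        if response["score"] < threshold and reason:
            reasons.setdefault(response["task"], []).append(reason)
    return reasons


# Hypothetical survey export.
survey = [
    {"task": "connect_integration", "score": 3, "reason": "Could not find the API key field"},
    {"task": "connect_integration", "score": 6, "reason": "Straightforward"},
    {"task": "invite_teammate", "score": 4, "reason": "  "},
    {"task": "invite_teammate", "score": 2, "reason": "Invite email never arrived"},
]

friction = low_score_reasons(survey)
for task, task_reasons in friction.items():
    print(task, task_reasons)
```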

Alternatively, customer experience expert Karl Gilis recommends asking “How difficult was it to [ Task ]?”

Although this open-ended question will generate lower scores than SEQ questionnaires, the answers will be a treasure trove of insights.

You can expand your research by using automated in-app messages to target users who have just completed key tasks in your product. If you do, consider tracking how scores evolve over time.

– –

This post is an excerpt from Solving Product. If you enjoyed the content, you'll love the new book.