“If you want to be successful as a company, the culture should be of experimentation. Experimentation, to be successful, should be based on user insights.” – Karl Gilis, AGConsult Partner

It takes a certain level of maturity to run effective product and growth experiments.

To avoid shipping experiments for the sake of shipping experiments, teams need to focus on delivering outcomes. They also need to be willing to embrace failure to make progress.

On average, 80% of experiments fail to deliver the expected outcomes, but with the right method, 100% of experiments can help you learn and progress.

With the right mindset, experiments can help teams to make increasingly better decisions.

To create this mindset, assemble a multidisciplinary team—often a marketer, a product manager, a data analyst, and engineers—and let them work out their own process.

Unless your organization is entirely focused on growing through experimentation, it’s a good idea to split off the growth team to avoid competition between backlogs.

You should focus on one or two core goals at a time, aligning with your North Star metric or the AARRR steps (Acquisition, Activation, Retention, Referral, Revenue) that you’re focused on. Your goals should be big, your experiments small and nimble.

Once you know what you’re trying to achieve, you need to establish a high-tempo cadence of testing.

Since the average growth experiment across organizations has a one in five chance of working, the more quality experiments your team is able to run, the faster you’ll learn and, hopefully, grow.
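To make the arithmetic behind test velocity concrete, here is a minimal sketch. It assumes each experiment succeeds independently with the same one-in-five probability, which is a simplification: real experiments vary in quality and are rarely fully independent. The function names are illustrative only.

```python
# Assumption: every experiment has the same, independent 20% chance of
# delivering its expected outcome (the "one in five" figure).
SUCCESS_RATE = 0.2


def expected_wins(num_experiments: int, success_rate: float = SUCCESS_RATE) -> float:
    """Average number of successful experiments you can expect."""
    return num_experiments * success_rate


def chance_of_at_least_one_win(num_experiments: int, success_rate: float = SUCCESS_RATE) -> float:
    """Probability that at least one experiment out of the batch succeeds."""
    return 1 - (1 - success_rate) ** num_experiments


if __name__ == "__main__":
    for n in (1, 5, 10, 20):
        print(
            f"{n:>2} experiments: ~{expected_wins(n):.1f} expected wins, "
            f"{chance_of_at_least_one_win(n):.0%} chance of at least one win"
        )
```

Under these assumptions, a team running five experiments has roughly a two in three chance of at least one win, which is why cadence matters as much as any single idea.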

Sean Ellis’ Process for Rapid Product and Growth Experiments

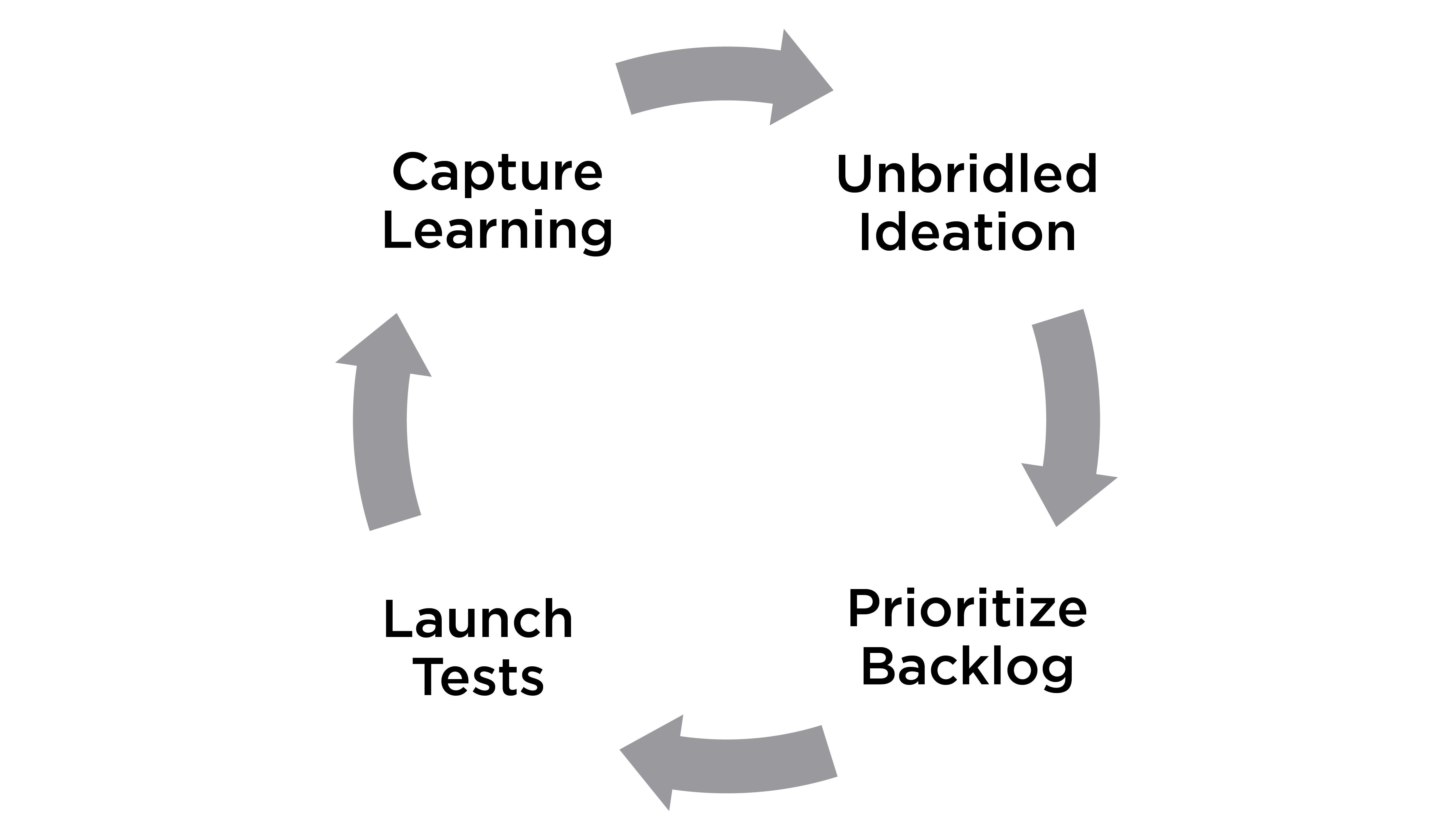

But there’s a method to creating this velocity. Sean Ellis recommends following a four-step process:

1) Unbridled ideation: To create inclusivity within the team, generate better ideas, and always have a large pool of experiments to pick from, solicit opportunities not only from the team but also from the entire organization. Testing many concepts helps reduce politics and makes team members feel like they’re part of the process. Transparently sharing experiment results and holding team members accountable for performance reinforces objectivity.

2) Backlog prioritization: Ellis created the I.C.E. score to help rank ideas. In his model, each person on the team assigns a rating from 1 to 10 on three criteria:

- Impact: If the experiment is successful, what’s the potential impact? More revenue? More sign-ups? More referrals? Impact always needs to be in relation to the goal of the experiment.

- Confidence: What’s the likelihood that the experiment delivers the expected impact? What evidence do we have to prove this?

- Ease: How easy is it to run the experiment? Will it require a lot of engineering resources? Or is it a simple A/B test of marketing copy?
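As a rough illustration of how these ratings could turn into a ranked backlog, here is a minimal sketch. It assumes that each person's score is the simple average of their three ratings and that the team score is the average across people. The text above does not specify how ratings are combined, so treat that as one reasonable convention. The ideas and numbers in the backlog are made up.

```python
from statistics import mean


def ice_score(ratings):
    """Team I.C.E. score for one idea.

    `ratings` holds one (impact, confidence, ease) tuple per team member,
    each value between 1 and 10.
    Assumption: a person's score is the mean of their three ratings, and
    the team score is the mean of the per-person scores.
    """
    for rating in ratings:
        if any(not 1 <= value <= 10 for value in rating):
            raise ValueError(f"ratings must be between 1 and 10, got {rating}")
    return mean(mean(rating) for rating in ratings)


# Hypothetical backlog: two team members rated each idea.
backlog = {
    "Rewrite onboarding email copy": [(6, 7, 9), (5, 6, 8)],
    "Add referral prompt after checkout": [(8, 5, 4), (9, 4, 5)],
    "Redesign pricing page": [(9, 3, 2), (8, 4, 3)],
}

# Highest score first.
ranked = sorted(backlog, key=lambda idea: ice_score(backlog[idea]), reverse=True)

if __name__ == "__main__":
    for idea in ranked:
        print(f"{ice_score(backlog[idea]):.2f}  {idea}")
```

Note how the low-effort copy test outranks the high-impact redesign here: ease and confidence pull small, quick experiments up the list, which fits the "big goals, small experiments" principle.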

Some organizations will want to change the relative weight of each of these criteria based on their own growth theses. Growth expert Guillaume Cabane recommends taking prioritization a step further by converting impact projections into dollar amounts. Using Guillaume’s approach, teams are able to make revenue growth projections for their organizations.
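Here is a minimal sketch of what re-weighting and a dollar-based projection could look like. Everything in it is an assumption for illustration: the weights are arbitrary, and treating a 1 to 10 confidence rating as a rough probability is just one possible way to discount a revenue projection. It is not a description of Guillaume Cabane's actual method.

```python
# Hypothetical weights: this team cares most about impact.
WEIGHTS = {"impact": 0.5, "confidence": 0.3, "ease": 0.2}


def weighted_ice(impact, confidence, ease, weights=WEIGHTS):
    """Weighted average of the three ratings (each 1-10)."""
    total_weight = sum(weights.values())
    weighted_sum = (
        impact * weights["impact"]
        + confidence * weights["confidence"]
        + ease * weights["ease"]
    )
    return weighted_sum / total_weight


def expected_revenue(projected_revenue, confidence):
    """Revenue projection discounted by confidence.

    Assumption: a confidence rating of 1-10 is read as a 10%-100%
    chance that the projected revenue materializes.
    """
    return projected_revenue * confidence / 10


if __name__ == "__main__":
    # Made-up experiment: high impact, moderate confidence, hard to build.
    print(f"Weighted score: {weighted_ice(8, 5, 4):.1f}")
    print(f"Expected revenue: ${expected_revenue(50_000, 4):,.0f}")
```

Expressing impact in dollars makes experiments comparable across goals, but the output is only as good as the projections and confidence ratings that go in.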

Each experiment’s I.C.E. score can be used to guide prioritization. Hosting a weekly growth meeting will increase agility and help set a regular cadence for the team.

Running Experiments

3) Test launch: During the week, teams take time to brainstorm and dive deeper into the experiments that they have committed to implementing.

If you’re starting out, start small by running one or two experiments a week. As you learn and iron out your experimentation processes, gradually increase the number of experiments that your team runs, and how ambitious the experiments are. Starting with a good backlog will allow your team to build their experimentation muscles before they begin to increase test velocity.

Don’t let past experiments blind you. Things change. There are hundreds of reasons why something didn’t work in the past. Revisit old hypotheses, tweak their variables, and rerun the experiments when it makes sense.

4) Operationalizing learnings: Lastly—and this step is the one teams often overlook—the team has to learn from every experiment it runs.

What was the goal? Why did it work or not work? What can be learned from the experiment?

Involve the whole team in the analysis. Talk through the results and the assumptions made. Often, the tests that didn’t work lead to the biggest breakthroughs.

Making experiment results transparent and accessible helps to keep everyone in sync. It also ensures that your experimentation process remains focused on your goals, not on running a series of experiments.

– –

This post is an excerpt from Solving Product. If you enjoyed the content, you'll love the new book. You can download the first 3 chapters here →.